Serverless: a word that has been doing the rounds all over the tech world for the last few years. I have been asking myself: is this the right time to go Serverless? The answer is YES.

Let's understand why companies should evolve toward Serverless. But before that, we will start with the basic questions: what, when, and how?

What is Serverless?

Serverless computing (or serverless for short) is an execution model in which the cloud provider (AWS, Azure, or Google Cloud) is responsible for executing a piece of code by dynamically allocating the resources, and charges only for the amount of resources used to run that code.

It is an architecture pattern in which we, as users, don't need to worry about the underlying infrastructure that runs our code. Our application runs independently of the infrastructure requirements: we define the memory the application needs, and the infrastructure provider takes care of the rest.
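To make this concrete, here is a minimal sketch of what "a piece of code" looks like on such a platform. It assumes the AWS Lambda convention for Python, where the platform calls a function with an `event` and a `context` argument. The event shape (a `name` field) and the response format are hypothetical and chosen only for illustration.

```python
import json


def handler(event, context):
    """Entry point that the platform invokes once per request.

    There is no server setup, port binding, or process management here;
    the provider handles all of that.
    """
    # "name" is a hypothetical field in the incoming event.
    name = (event or {}).get("name", "world")
    return {
        "statusCode": 200,
        "body": json.dumps({"message": f"Hello, {name}!"}),
    }


if __name__ == "__main__":
    # Simulate a single invocation on a local machine.
    print(handler({"name": "serverless"}, None))
```

Everything outside this function (provisioning, routing the request to the function, tearing the environment down) is the provider's job; the memory setting is supplied as configuration at deploy time rather than in the code.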

Why Go Serverless?

Going Serverless brings a lot of benefits and helps address many of the problems that usually exist in application designs.

There are many benefits of going serverless, but let's talk about the 4 major ones that should encourage us to adopt it.

- No Server Management: As a new startup, would you rather work on developing features or worry about designing infrastructure for your application? If you are an enterprise, how many application features or new product ideas are stuck in the pipeline because the existing infrastructure cannot meet their demands? With Serverless, both problems are resolved, since you do not have to get involved in any kind of server management.

- High Availability: The availability of the overall application depends heavily on the infrastructure underneath it. With Serverless, responsibility for infrastructure availability stays with the cloud provider, which keeps the platform highly available so that your application can go on serving requests with minimal downtime.

- Flexible Scaling: When we design an application using Serverless design patterns, we do not have to estimate the traffic and reserve capacity for it. The cloud provider scales its infrastructure up or down based on the traffic the deployed application actually receives.

- No Idle Pricing: One of the main challenges in traditional cloud infrastructure design is that, no matter how many people are using the application, you keep your servers running all the time, sized for the maximum projected capacity. With a Serverless design that goes out the door: we pay only when someone is using our application. Otherwise the compute charges are zero, because the cloud provider is not reserving any infrastructure to run our application.
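The "No Idle Pricing" point is easiest to see with a little arithmetic. The sketch below compares an always-on fleet with a pay-per-use model. Every price and traffic figure in it is a made-up placeholder, not a quote from any provider; plug in the numbers from your own provider's pricing page.

```python
def always_on_monthly_cost(servers: int, hourly_rate: float, hours: float = 730.0) -> float:
    """Cost of servers that run all month, whether or not anyone uses them."""
    return servers * hourly_rate * hours


def pay_per_use_monthly_cost(
    requests: int,
    avg_duration_s: float,
    memory_gb: float,
    price_per_gb_second: float,
    price_per_million_requests: float,
) -> float:
    """Cost that depends only on actual invocations (GB-seconds plus a request fee)."""
    compute = requests * avg_duration_s * memory_gb * price_per_gb_second
    request_fee = requests / 1_000_000 * price_per_million_requests
    return compute + request_fee


if __name__ == "__main__":
    # All figures below are hypothetical placeholders.
    fleet = always_on_monthly_cost(servers=2, hourly_rate=0.10)
    busy = pay_per_use_monthly_cost(1_000_000, 0.2, 0.5, 0.00002, 0.20)
    idle = pay_per_use_monthly_cost(0, 0.2, 0.5, 0.00002, 0.20)
    print(f"always-on fleet:       {fleet:.2f}")
    print(f"serverless, 1M calls:  {busy:.2f}")
    print(f"serverless, no calls:  {idle:.2f}")
```

The always-on figure is the same at zero traffic and at peak traffic, while the pay-per-use figure drops to zero when nobody calls the application. At very high, steady traffic the comparison can tip the other way, so it is worth running the numbers for your own workload.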

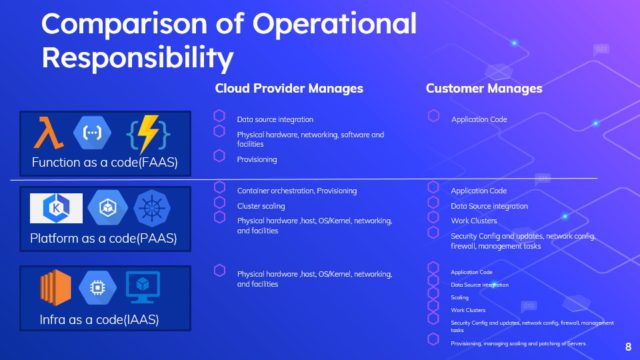

Comparison of Operational Responsibility

Here is a glimpse of the operational responsibility under the various design patterns we can follow. It should help you decide which pattern to go ahead with.

If you are going to use a standard runtime environment such as Go, Java, Node.js, or Python and are building an event-driven product, you should consider Serverless, which would reduce your time to market.

Good & Bad of Serverless Design

Now the question is: what are the pros and cons of using Serverless design? We have already talked about the good points, so let's talk about the things that don't work well in Serverless designs and how we can mitigate them.

- Vendor Lock-in: The serverless infrastructure of each public cloud provider is currently very specific to that provider. If you are using AWS for your serverless design, there is no straightforward procedure for migrating it to another cloud provider. If you want to avoid vendor lock-in, you could use design patterns like Serverless for easier migration.

- Testing Locally: Since the application needs to run in a public cloud provider's environment, there is no direct way to run the whole stack locally, which makes local testing difficult. This can be solved by using a separate GitHub plugin that allows you to deploy the stack locally as well.

- Performance: Serverless environments come with limits. On AWS Lambda, a single invocation cannot run for more than 15 minutes, and memory could be allocated only up to 3 GB at the time of writing (AWS has since raised this ceiling to 10 GB). The AWS Lambda documentation lists the full set of limits. You can design your microservices so that each request can be served within these limits.

- Observability: In Serverless environments, one of the main challenges is making sure that, once the application is deployed, you have enough monitoring capability to see what it is doing. Traditional monitoring tools, at this point in time, don't offer native monitoring for Serverless applications. We have been trying to solve this for some time and have compared several options for monitoring our Serverless applications. We have been using Thundra for quite a few months now, and you can start using it as well.

- Cold Starts: Serverless infrastructure is on-demand infrastructure, which means it is not always on. When no warm instance is available, there is a delay while the runtime environment is set up to serve the request. This delay can range from a few milliseconds to noticeably longer, depending on the runtime and the size of the deployment package. There are several ways in which cold start times can be reduced or even avoided.

Conclusion

If you are looking for a way to migrate your existing applications to Serverless infrastructure, we at DataVizz help enterprises create a migration strategy for moving their products to a Serverless application stack.